Commentary

Five Time Tested Lessons for Public Servants

Published 3 years ago by admin

H.E. Justin Ho Guo Shun

(Special to the BINN)

The problem: Innovation in neurotechnology is running faster than the policy framework to oversee its use.

Why it matters: Data derived from neurotechnology devices creates new challenges for privacy, informed consent, data governance and identity.

The solution: Develop a new approach to oversight which supports innovation, manages risk and protects human rights.

Neurotechnology includes tools used to monitor, analyse and/or influence the human nervous system, in particular the brain. The UN has identified that a new set of rights should be recognised to protect a “person’s cerebral and mental domain”, which includes individual mental integrity and identity.

The challenge from a public policy perspective is finding a balance between the current and future applications of these technologies and protecting customer rights, including ownership and use of data generated through neurotechnology. Moreover, there are implications for our identity if these devices alter our brain function, which in practice can create a state where we exhibit a new or different identity, as has been reported in several neurotechnology recipients during clinical trials.

In general terms, there are two categories of neurotechnology: invasive, or implanted, technology, which is regulated, and non-invasive, or wearable, technology, which is not regulated. From a medical risk management perspective, the risk of implanting an invasive device and using it to either monitor or intervene is much higher than the medical risk of non-invasive and easily removable devices.

Implants may be further classified both by their likely accuracy and by the degree of invasion, which is determined by where the implant is located, i.e. within the brain, on the dura, under the scalp, on the head, or installed through blood vessels.

Wearable neurotechnology includes helmets, headbands, wristbands, watches or other devices which measure biomedical data. A policy grey area exists where customers may choose to have non-neurotechnology implants installed for convenience, such as a credit card chip inserted in the user’s wrist to enable payments for goods or services.

As an investor, I attended a seminar with brain surgeons, medical scientists, university professors, inventors, and international law experts on the topic of ‘Neurotechnology and Law’. I participated with some scepticism, assuming there would be a call on the already over-stretched bureaucracy to regulate a realm of science fiction possibility. What I discovered is that neurotechnology is here and now, including:

Brain implants trialled in patients with Lou Gehrig’s disease, which are restoring a thought-to-word-processing connection with 96% accuracy. Some estimate we are three to five years away from a wearable device which would enable speech in brain injury patients.

Schools trialling wearable devices (helmets) which detect the brain activity of students and display red or green lights to indicate whether they are paying attention in class.

Wearable wrist devices which enable electrical signals from the brain to operate a computer mouse with full functionality.

Brain implants which can control exoskeletons enabling paralysed patients to use their limbs.

Nanobots which can be steered around the brain to turn function on and off.

Implants, currently effective in mice, which enable infrared vision.

Given the current state of neurotechnology innovation, there is some urgency to establish a policy framework that effectively protects customers, starting with the question: how do we frame our right to mental privacy?

If we think about just a few of the services government undertakes, including law enforcement, education and health care, it’s easy to create “what if?” scenarios for government use of data derived via neurotechnology:

We could interpret brain data, leading to prioritisation or denial of certain services.

We could convict, or overturn convictions, based on the ability to interpret the thoughts and intentions of an individual whom we suspect has committed a crime.

We could use neurotechnology to determine with acceptable accuracy whether someone is telling the truth.

We could pay our employees only for hours where their brain function indicates they are actively engaged in their employment.

We could rank intelligence, in terms of education or prospective employment, based on an individual’s ability to pay attention.

We could rely on AI-derived advice, instead of using it to inform decision-making.

We could use neurotechnology to move beyond healthcare and the restoration of functions to applications of enhancement, leading to the creation of super-human abilities.

Recommendations

Policy and regulatory oversight needs to address the use of data collected from any neurotechnology, and must include effective mechanisms for informed consent. This would incorporate an understanding of risk factors, as well as data ownership and access rights, including access to data regarding our thoughts and behaviours, and by extension our identity.

The current regulatory environment does not recognise a hybrid model between a product and a service. The question of whether a person has ownership over a wearable device is much more straightforward than ownership of neurotechnology implanted inside a brain. A neurotechnology policy framework needs to recognise customer rights for both products and data as a service.

In a scenario where neurotechnology alters brain function, rights need to be established between the identity who received the implant, and the new personality or identity created through its use, who may have different legal responsibilities than the identity who received the implant.

The policy framework needs to also set out other consumer protections including:

Processes for what to do when neurotechnology fails or the managing company goes out of business.

Guardrails for determining the level of effect the neurotechnology may have before a new identity may be recognised.

Regulation with broad applicability, which addresses all (secondary) uses of a neurotechnology, not merely its ‘approved use’.

Criteria for evaluating quality and success, since neurotechnology has the potential to fix one issue for a customer but also generate others.

Policymakers need to start addressing the implications of neurotechnology on human rights, data protection and identity management, as consumer uptake of neurotechnology is running well ahead of the framework overseeing its use.

Pride Without Prejudice: CHR Calls for Stronger Protection of LGBTQIA+ Rights

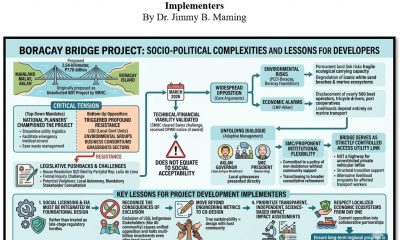

Why Boracay Bridge Welcomed by Oppositions: Lessons for Project Development Implementers?

San Marino Consulate Expands Education Efforts Through Partnerships for Filipino Youth

Why Carziqo’s Fleet Model Is Built for Sustained Earnings

WOMO Relaunches Affiliate and Creator Platform for Regulated Companies in the Philippines

DOLE, NIA Implement TUPAD for Farmers Belonging to Irrigators Association